Volcengine Develops Its Own "Tokenomics"

Behind the scale of 50 trillion tokens processed per day.

Token, Token, and more Tokens.

At the FORCE Conference · Spring on December 18, Volcengine President Tan Dai mentioned “Token” 18 times during his 30-minute opening keynote, while “Cloud” appeared only 7 times. Volcengine also updated the closely watched daily Token usage figure for its Doubao Foundation Model:

50 Trillion.

This figure has grown 417-fold since the model’s launch and more than tenfold since last December. For context, Google’s latest monthly Token figure, from October, works out to a daily average of about 43 trillion. Doubao’s invocation volume now ranks first in China and third globally. According to an IDC report, Volcengine also ranks first in China for public cloud LLM service usage, with its Model-as-a-Service (MaaS) market share rising from 46.4% in 2024 to 49.2% this year. “In other words, for every two Tokens generated on China’s public clouds, one is produced by Volcengine,” Tan Dai told the 5,000-strong audience.
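The figures in this paragraph can be cross-checked with simple arithmetic. The sketch below is only an illustration: the launch volume and the monthly total are values implied by the quoted numbers, not figures stated in the article, and the function names are made up for this example.

```python
# Figures quoted in the article.
DOUBAO_DAILY_TOKENS = 50e12    # 50 trillion Tokens per day
GROWTH_SINCE_LAUNCH = 417      # "417-fold since launch"
GOOGLE_DAILY_AVERAGE = 43e12   # Google's October monthly figure as a daily average
DAYS_IN_OCTOBER = 31
MAAS_SHARE = 0.492             # IDC MaaS market share quoted for this year


def implied_launch_volume(current_daily: float, growth_multiple: float) -> float:
    """Daily volume at launch implied by today's volume and the growth multiple."""
    return current_daily / growth_multiple


def implied_monthly_total(daily_average: float, days: int) -> float:
    """Monthly total implied by a daily average (the reverse of the article's conversion)."""
    return daily_average * days


def tokens_per_volcengine_token(share: float) -> float:
    """How many public-cloud Tokens are generated for each one served at this share."""
    return 1 / share


launch_volume = implied_launch_volume(DOUBAO_DAILY_TOKENS, GROWTH_SINCE_LAUNCH)
google_monthly = implied_monthly_total(GOOGLE_DAILY_AVERAGE, DAYS_IN_OCTOBER)
ratio = tokens_per_volcengine_token(MAAS_SHARE)

print(f"Implied launch volume: {launch_volume / 1e9:.0f} billion Tokens/day")
print(f"Implied Google monthly total: {google_monthly / 1e15:.2f} quadrillion Tokens")
print(f"One Volcengine Token per {ratio:.2f} public-cloud Tokens")
```

At a 49.2% share the ratio is slightly above two, which is why “one in every two” is a rounded way of putting it.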

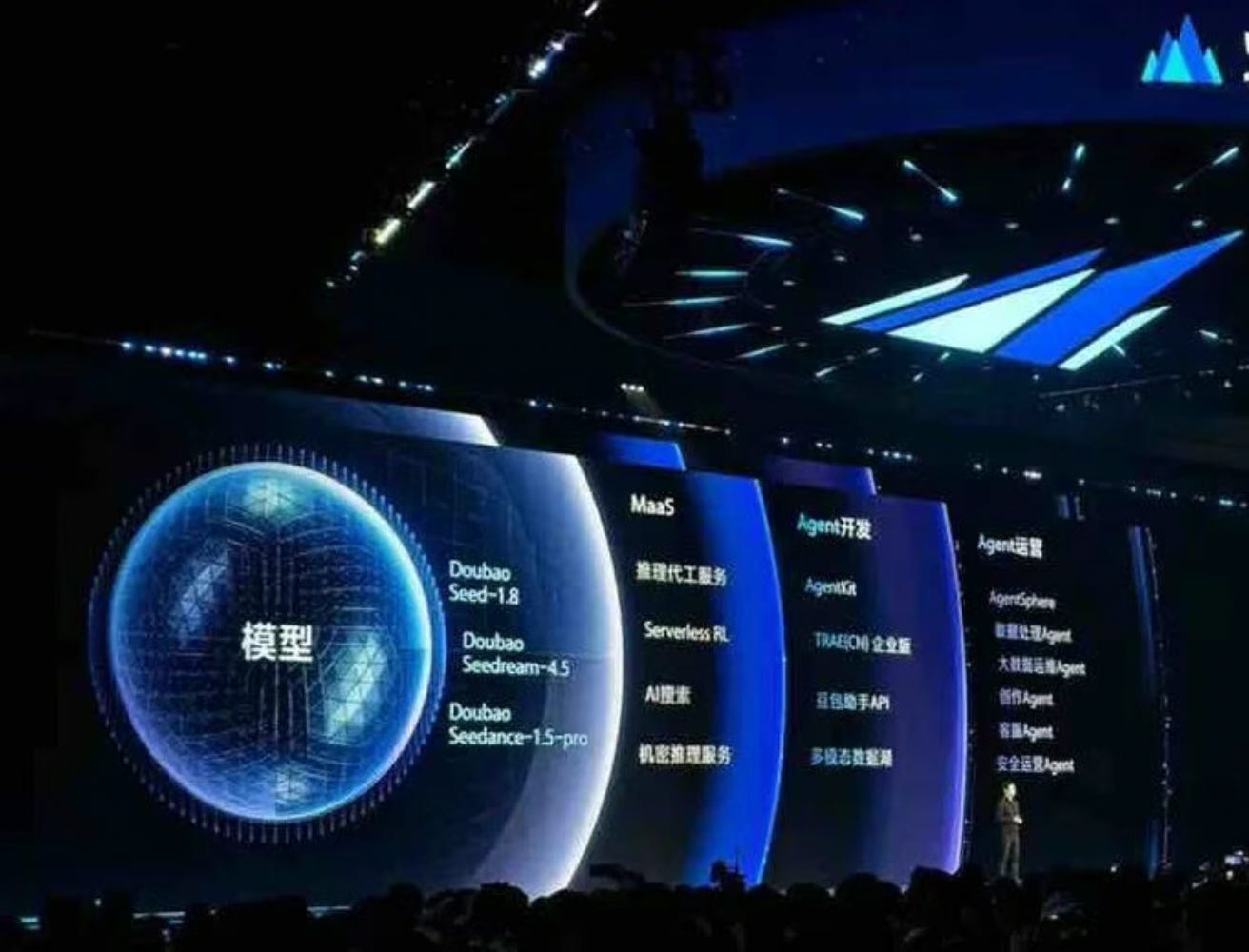

During the event, Volcengine followed its tradition of announcing ByteDance’s latest model progress. Doubao Model 1.8 was officially unveiled, with significant improvements across multiple benchmarks. It continues the “All-in-One” technical approach, developing text, code, Agent, audio, image, and video capabilities within a single foundation model. Seedance 1.5 Pro, a model specialized for video generation, was launched at the same time, reaching what was described as “world-leading levels” in lip-syncing and in handling Chinese dialects.

The message from ByteDance is clear: Doubao is creating Tokens, and its evolution mirrors the structural shift in Token consumption—from “inference replacing training” to today’s era where multimodal content and Agents are the primary drivers. These demands can all be met by Volcengine’s services. Behind this rapid growth, Volcengine is establishing its own system of Tokenomics.

1. More Tokens Represent More Intelligence

A Token is the fundamental unit of information processing for large models. Whether the input is text, images, or video, everything is converted into Token sequences for computation. In essence, Token volume measures how much AI is being used. But a question arises: Token counts measure length, not importance, so a long article can consume as many Tokens as, or more than, a short piece of critical code. Can a pure Token count truly reflect the value created by AI?

Algorithm experts at Volcengine recalled internal debates on this topic. The final conclusion was: Token volume is definitely the right metric. “For AI to generate value in real-world scenarios, people must use it. Regardless of whether the unit value is large or small, it is fundamentally correlated with Token usage. Your final key decision might only be a ‘Yes’ or ‘No’ (a single Token), but reaching that conclusion inevitably requires consuming a vast amount of Tokens.”

Consequently, Volcengine settled internally on the view that more Tokens represent more intelligence. Today, the internal structure of Token growth is also shifting. Wu Di, Head of Intelligent Algorithms at Volcengine, provided an evolutionary roadmap: “By 2027 or 2028, most signals a consumer receives from an AI assistant will be visual; you won’t see massive blocks of text. By then, LLMs will sink into the underlying logic of the digital world in the form of coding and agents.” In other words, Tokens will become layered. LLMs will move downward to become the foundation, while multimodal visual and interactive Tokens will emerge at the top.

This layering means Tokens remain a growing and effective metric. Wu Di predicts that by 2030, Token consumption in China’s domestic market will be more than 100 times what it is today. By then, the core indicator of a company’s intelligence will shift from the number of GPUs it owns to the total Tokens it consumes, because Token volume is the only unified metric that cuts across “model capability, usage frequency, and real demand.”
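To get a feel for what a 100-fold increase by 2030 implies, the multiple can be converted into a compound annual rate. The sketch below assumes that “today” means 2025 and that the horizon is therefore five years; the article does not state the baseline year for this forecast, and the helper names are illustrative.

```python
import math


def implied_annual_multiple(total_multiple: float, years: float) -> float:
    """Constant yearly growth multiple that compounds to total_multiple over the period."""
    return total_multiple ** (1 / years)


def doubling_time_months(total_multiple: float, years: float) -> float:
    """Months needed to double at the implied constant growth rate."""
    annual = implied_annual_multiple(total_multiple, years)
    return 12 * math.log(2) / math.log(annual)


# Assumption: a 5-year horizon (2025 to 2030) and exactly 100x growth.
annual = implied_annual_multiple(100, 5)
months = doubling_time_months(100, 5)
print(f"Implied growth: about {annual:.2f}x per year")
print(f"Implied doubling time: about {months:.1f} months")
```

Under these assumptions, consumption would need to roughly two-and-a-half-fold every year, which is slower than the more-than-tenfold growth the article reports for the past twelve months.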

2. Cloud Infrastructure Must Be Reconstructed Around the Model

If more Tokens mean more intelligence, then Volcengine’s mission—as an “AI Cloud Native” infrastructure born from within ByteDance—is to help enterprise customers better generate the Tokens they need. The traditional cloud computing system is experiencing “discomfort” because it was designed around computing power. Volcengine believes new demands are destined to revolve around models. “The traditional IT architecture of layered IaaS, PaaS, and SaaS is no longer effective. An AI cloud-native architecture centered on the model is forming,” said Tan Dai.

This vision birthed a new suite of systems:

Inference Outsourcing: Companies can host trained models on Volcengine and pay based on actual Token consumption, eliminating the need to build their own inference clusters.

Agent Development Kit (ADK): Fully upgraded to support dynamic runtimes, multi-session sharing, strong identity passing, and built-in toolchains.

Smart Endpoint: Supports model routing, automatically diverting traffic to backends like Doubao, DeepSeek, or Kimi based on performance and cost strategies.

Ark Platform: Further upgraded to allow customers to fine-tune models using RL (Reinforcement Learning) in their own scenarios.
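The routing and pay-per-Token ideas in this list can be illustrated with a small sketch. This is not Volcengine’s API: the `Backend` type, the `route` and `bill` functions, and every price and latency figure are hypothetical placeholders, and the policy shown (the cheapest backend that meets a latency budget) is just one possible “performance and cost strategy.”

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    cost_per_million_tokens: float  # hypothetical price, arbitrary currency
    p95_latency_ms: float           # hypothetical latency measurement


def route(backends: list[Backend], max_latency_ms: float) -> Backend:
    """Pick the cheapest backend within the latency budget.

    If no backend meets the budget, fall back to the fastest one.
    """
    eligible = [b for b in backends if b.p95_latency_ms <= max_latency_ms]
    if not eligible:
        return min(backends, key=lambda b: b.p95_latency_ms)
    return min(eligible, key=lambda b: b.cost_per_million_tokens)


def bill(tokens_consumed: int, backend: Backend) -> float:
    """Pay-per-Token charge: cost scales only with the Tokens actually consumed."""
    return tokens_consumed / 1_000_000 * backend.cost_per_million_tokens


# Placeholder numbers for illustration only, not real prices or latencies.
BACKENDS = [
    Backend("doubao", cost_per_million_tokens=0.8, p95_latency_ms=400),
    Backend("deepseek", cost_per_million_tokens=0.5, p95_latency_ms=900),
    Backend("kimi", cost_per_million_tokens=1.2, p95_latency_ms=600),
]

relaxed = route(BACKENDS, max_latency_ms=1000)  # all eligible, cheapest wins
strict = route(BACKENDS, max_latency_ms=500)    # only the fastest qualifies
print(relaxed.name, strict.name, bill(2_000_000, relaxed))
```

The point of the sketch is that the caller asks for an outcome (a latency budget and a Token count) rather than for machines, which is the shift the article describes.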

Many details in these products reflect fundamental differences from industry norms. For instance, the ADK’s dynamic runtime design challenges standard practice. While AWS AgentCore starts a separate runtime for every session, Volcengine uses a multi-session shared mode. Tian Taotao, Head of Cloud Infrastructure Products at Volcengine, noted that this is a matter of industry inertia. While a runtime per session worked in the past, it is too “extravagant” for the model era. ByteDance’s internal AI-native needs have long demanded more cost-sensitive designs, which are now being offered to all users.
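The cost argument behind a shared runtime can be made concrete with a toy model. The sketch below is neither the ADK’s nor AgentCore’s actual design: `Runtime`, `RuntimePool`, and the capacity of eight sessions per runtime are assumptions chosen only to show how sharing reduces the number of runtimes that must be started.

```python
from dataclasses import dataclass, field


@dataclass
class Runtime:
    runtime_id: int
    capacity: int
    # Each session keeps its own state even though the runtime is shared.
    sessions: dict = field(default_factory=dict)

    def has_room(self) -> bool:
        return len(self.sessions) < self.capacity


class RuntimePool:
    """Places sessions on existing runtimes and starts a new one only when all are full."""

    def __init__(self, sessions_per_runtime: int):
        self.sessions_per_runtime = sessions_per_runtime
        self.runtimes: list[Runtime] = []

    def attach(self, session_id: str) -> Runtime:
        for runtime in self.runtimes:
            if runtime.has_room():
                break
        else:
            # No runtime has spare capacity (or none exist yet): start one.
            runtime = Runtime(len(self.runtimes), self.sessions_per_runtime)
            self.runtimes.append(runtime)
        runtime.sessions[session_id] = {}
        return runtime


def runtimes_for(n_sessions: int, sessions_per_runtime: int) -> int:
    """Number of runtimes started to serve n_sessions concurrent sessions."""
    pool = RuntimePool(sessions_per_runtime)
    for i in range(n_sessions):
        pool.attach(f"session-{i}")
    return len(pool.runtimes)


per_session = runtimes_for(100, sessions_per_runtime=1)  # one runtime per session
shared = runtimes_for(100, sessions_per_runtime=8)       # hypothetical shared mode
print(per_session, shared)
```

A real shared runtime would also need isolation and identity guarantees between sessions, which is presumably where the ADK’s “strong identity passing” comes in; this toy model ignores that entirely.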

Volcengine believes people will eventually “leap over” their focus on computing power and come directly for the models. “It’s hard to imagine that five years from now, new entrepreneurs will still be renting GPUs or setting up databases on the cloud. From day one, they will simply ask cloud providers for ‘Tokens’—direct access to model invocations and supporting tools,” said Wu Di.

3. Bridging Model Training and Market Demand

ByteDance and Volcengine now possess a unique combination of assets:

Massive Cloud Demand: Internal support for products like Douyin provides scale effects and cost advantages.

Top-Tier Model Product: Doubao is not just the most-used LLM product; from Volcengine’s perspective, it is the largest Agent product.

Leading Token Volume: 50 trillion/day and climbing.

Unified Model: The Doubao model integrates multimodality and reasoning to support massive real-world demand.

This full-stack capability is essential for top AI players. Comparing different paths:

OpenAI + Microsoft: Own model, strategic partner’s cloud.

Alibaba Cloud: Open-source models grown on their own cloud, with a recent push on products.

Google: Fully self-developed, closed-source models, with a direct link from research to product.

ByteDance’s path closely resembles Google’s. Doubao corresponds to Gemini as a unified multimodal agent model. Seedance 1.5 Pro keeps pace with (and in some areas exceeds) Veo. As at Google, this technology sits on an AI-centric machine learning platform and cloud service.

A less-discussed similarity is the approach to model technology. After the success of Gemini, Google emphasized the “unification” of models, R&D, and products. Similarly, ByteDance feeds market demand directly into model training. Volcengine has its own algorithm staff, who share “hybrid offices” and flexible working arrangements with Seed, ByteDance’s model department. The market insights gathered by Volcengine directly influence the direction of model R&D.

This is evident in the Doubao model:

All-in-One Approach: Customers felt there were too many model versions. To reduce selection costs, the model was unified.

Business-Value Benchmarks: ByteDance believes evaluations should rely not only on public benchmarks but also on real business value.

Practical Features: Seedance includes a “Draft” capability so users can preview rather than “pulling a gacha” (randomly hoping for a good result). Meanwhile, features like 128k context—which look good for marketing but have low actual demand—are deprioritized in favor of more robust tool-calling APIs.

“In 2025, over 1 million enterprises and individuals used Volcengine’s large model services across 100+ industries. We found that over 100 companies have each accumulated more than 1 trillion Tokens in usage,” Tan Dai shared. These figures are presented as evidence that ByteDance’s demand-led approach to model development is working.

Summary: The Core of Volcengine’s Tokenomics

More Tokens = Higher Intelligence: Tokens are the yardstick of intelligence; their growth and structural changes guide technical evolution.

Model-Centric Cloud: Traditional cloud vendors have too much inertia. Volcengine’s largest client is ByteDance—a company built on AI. By solving ByteDance’s needs, Volcengine has created a blueprint for the entire industry.

From Raw Material to Intelligent Units: Tan Dai previously described Token-based business models as “primitive.” Now, as Agents become widespread, Tokens will evolve beyond being mere “raw materials.”

Agents will link models together, and cloud platforms will assemble Tokens into Agents that interact with existing workflows. “Discussing Tokens today is like looking at the underlying operating system—it’s an IT budget consideration. But when abstracted into Agents, it can be viewed like BPO (Business Process Outsourcing), which expands the entire market scale,” said Tan Dai. “The ‘10 trillion Agent market’ people talk about is built on this logic.”
